Data Flow Architecture

Benefits for Your Organization

By partnering with MOST Programming for data flow architecture, organizations experience:

Improved Performance

Faster access to real-time data, enabling proactive decision-making.

Enhanced Collaboration

Centralized systems provide company-wide access to consistent and reliable data.

Reduced Costs

Efficient automation reduces the need for manual processes, saving time and resources.

Future-Ready Systems

Scalable solutions that prepare businesses for growth and technological advancements.

Data Flow Architecture Services

In today’s fast-paced digital landscape, businesses require seamless, accurate, and secure data movement across their systems to remain competitive. At MOST Programming, we specialize in designing data flow architectures that serve as the backbone of an organization’s operations. Our comprehensive services ensure that data is efficiently collected, transferred, and integrated across platforms, enabling businesses to unlock real-time insights, streamline processes, and drive better decision-making.

A well-designed data flow architecture ensures that organizations avoid data silos, eliminate bottlenecks, and maintain a consistent flow of information. With our expertise, we create streamlined data pipelines that handle the movement of large volumes of data across departments, tools, and platforms. By leveraging modern technologies and best practices, we enable businesses to utilize their data optimally for analytics, reporting, and automation purposes.

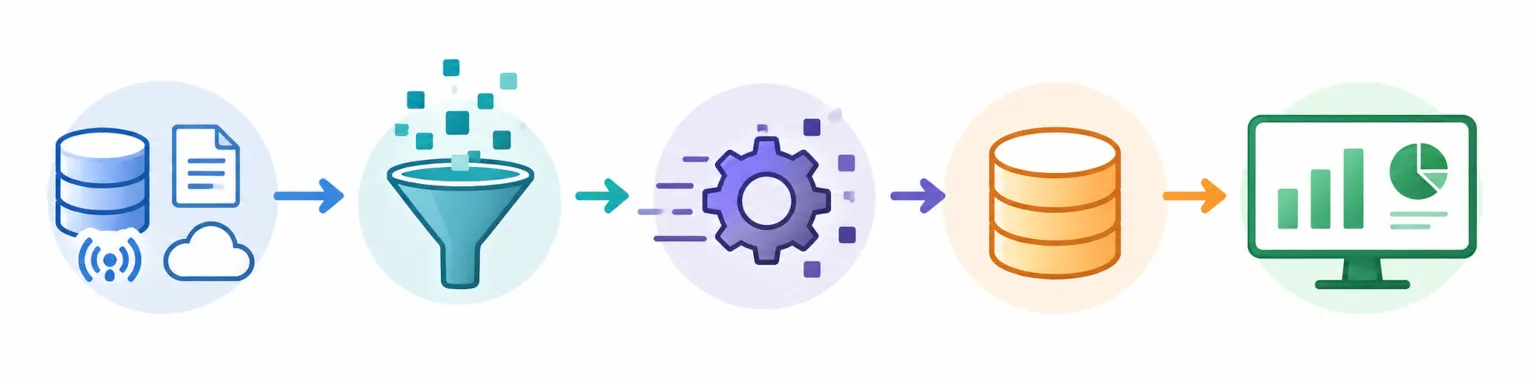

How We Achieve Seamless Data Flow

Our approach to data flow architecture begins with a deep understanding of the client’s goals, existing systems, and challenges. MOST Programming designs customized frameworks that align with organizational needs. By analyzing workflows, infrastructure, and data consumption patterns, we build pathways that ensure:

- Efficiency: Our architectures minimize delays, ensuring data is available in real time to support critical decisions. We use advanced data processing tools to accelerate data transfers and reduce redundancy.

- Scalability: As businesses grow, so do their data needs. We design solutions that adapt to increasing data volumes, allowing seamless scaling without disruptions.

- Security: We prioritize data protection through role-based access controls (RBAC), encryption, and compliance with industry standards. This ensures that sensitive data remains secure while allowing transparency where needed.

Leveraging Cutting-Edge Technologies

MOST Programming utilizes industry-leading tools and platforms to create robust data flow systems. Whether it’s real-time streaming, cloud-based integrations, or batch processing, our experts select technologies that align with your business model. Our solutions often incorporate:

- ETL Tools (Extract, Transform, Load): These streamline the movement and transformation of data.

- Cloud Platforms: Solutions like Azure, AWS, and Google Cloud for centralized, scalable data handling.

- APIs and Integrations: Seamlessly connecting various systems to facilitate uninterrupted data transfer.

Our Commitment

At MOST Programming, we don’t just build systems; we deliver solutions that drive operational excellence. Our dedicated team works closely with clients to design, monitor, and maintain architectures that continuously improve efficiency and performance. From small-scale projects to enterprise-level transformations, we ensure your data moves where it needs to go—quickly, securely, and efficiently.

With MOST Programming’s data flow architecture services, your organization gains a reliable foundation for analytics, automation, and innovation, helping you stay ahead in an ever-changing business environment.

Data Flow Architecture: Complete Guide to Modern Data Processing Systems

Introduction

Data flow architecture is a computing paradigm where instruction execution depends on data availability rather than program counters, enabling systems to process operations the moment their input data becomes ready. This approach fundamentally restructures how software systems handle data processing by treating the entire system as a sequence of transformations applied to data streams.

Data flow programming is a coding approach that leverages data flow architecture to design systems that manage, process, and transform data as it moves through various stages in data pipelines, supporting robust data governance and transformation.

This guide covers architectural patterns, implementation strategies, tools, and real-world applications of dataflow architecture while excluding low-level hardware design details. The target audience includes software architects, data engineers, and system designers seeking to implement scalable data processing systems. Whether you’re building real-time analytics platforms, ETL pipelines, or stream processing capabilities, understanding data flow principles helps you create responsive, parallel systems that handle modern workloads efficiently. Data flow architecture also enables real-time insights, allowing immediate data analysis for timely decision-making in applications such as event-driven architectures, IoT, and financial trading.

Direct answer: Data flow architecture enables parallel execution by processing instructions when their input data becomes available, in contrast to traditional control flow architecture, where a controller unit dictates execution order. This data-driven model avoids the sequential instruction-ordering bottleneck of von Neumann designs and supports both batch and streaming workloads.

Data flow diagrams (DFDs) help visualize how information moves through a system or a computer network, which makes networked systems easier to model and evaluate. Because DFDs can be read by technical and non-technical teams alike, they also improve shared understanding and collaboration.

By the end of this guide, you will:

- Understand core principles of data-driven execution and how data moves through processing stages

- Recognize key architectural patterns including static, dynamic, and synchronous dataflow models

- Select appropriate tools and frameworks for your specific latency requirements

- Implement efficient data flow solutions using modern technology stacks

- Overcome common challenges in memory management, load balancing, and debugging complex systems

Understanding Data Flow Architecture Fundamentals

Data flow begins with the ingestion of data from a given source, which can include both structured and unstructured data.

Data flow architecture structures software systems around the movement and transformation of data rather than sequential instruction execution. In this paradigm, data enters from sources, passes through processing modules via defined connections like pipes or buffers, and reaches a final destination or output sink. The order of execution follows from these connections: a module runs once the data it needs has arrived from the module upstream. This approach promotes data integrity and enables high throughput by allowing multiple transformations to proceed in parallel.

The relevance to modern distributed systems cannot be overstated. As organizations process streaming data from IoT sensors, user interactions, and business processes, traditional control flow architecture creates bottlenecks. Dataflow architecture addresses these limitations by executing operations only when their operands are available, enabling real time processing at scale.

Data Flow Programming and Data-Driven Execution Model

In data-driven execution, instructions fire when all input data becomes available rather than following sequential program flow dictated by a program counter. Each processing unit monitors its inputs and begins computation immediately upon receiving the required data tokens. This removes the sequencing bottleneck of conventional von Neumann designs, where a single control unit orders all operations.

This model directly enables parallel execution across compute resources. When incoming data arrives at multiple independent processing stages simultaneously, each stage can execute without waiting for unrelated operations to complete. The result is system performance that scales with available hardware rather than being constrained by sequential dependencies.
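The firing rule described above can be illustrated with a minimal sketch. The graph layout, node names, and the `run_dataflow` helper are assumptions made for this example and do not come from any particular framework. Nodes found ready in the same pass are independent of each other, so a real runtime could execute them in parallel; this sketch runs them one after another for simplicity.

```python
# Hypothetical graph: each node names its operation and the nodes that feed it.
GRAPH = {
    "sum":  {"op": lambda a, b: a + b, "inputs": ["a", "b"]},
    "diff": {"op": lambda a, b: a - b, "inputs": ["a", "b"]},
    "prod": {"op": lambda s, d: s * d, "inputs": ["sum", "diff"]},
}

def run_dataflow(graph, initial_tokens):
    """Fire each node as soon as every one of its inputs has a value."""
    values = dict(initial_tokens)   # tokens produced so far
    pending = set(graph)            # nodes that have not fired yet
    firing_order = []
    progress = True
    while pending and progress:
        progress = False
        # Nodes in `ready` do not depend on each other, so they could run in parallel.
        ready = sorted(
            name for name in pending
            if all(src in values for src in graph[name]["inputs"])
        )
        for name in ready:
            node = graph[name]
            values[name] = node["op"](*(values[src] for src in node["inputs"]))
            pending.discard(name)
            firing_order.append(name)
            progress = True
    return values, firing_order

values, order = run_dataflow(GRAPH, {"a": 7, "b": 3})
print(order)           # ['diff', 'sum', 'prod']
print(values["prod"])  # 40
```

No program counter appears anywhere: `prod` runs last only because its inputs are the last to become available.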

Core Components and Data Tokens

The key components of data flow systems include data sources that initiate flow, filters or processors that transform data incrementally, pipes that serve as unidirectional FIFO buffers for streaming bytes or structured records, and data sinks that store output data in a data warehouse or other target system. This arrangement is known as the pipe-and-filter architecture, a design pattern built from modular, independent processing stages, which makes it well suited to parallel processing and easy to maintain. Unlike the batch sequential style, where each stage must finish before the next begins, pipe-and-filter stages can work on records as they arrive, allowing more flexible and scalable data processing pipelines.
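As a rough illustration of sources, filters, pipes, and sinks, the sketch below uses Python generators as the pipes. The function names and the sample records are invented for the example. Generators pass records downstream one at a time, which shows the incremental style, although they do not by themselves run the stages in parallel.

```python
def source(lines):
    """Data source: emits raw records one at a time."""
    for line in lines:
        yield line

def parse(records):
    """Filter: turn 'name, amount' text into (name, float) pairs."""
    for record in records:
        name, amount = record.split(",")
        yield name.strip(), float(amount)

def only_large(pairs, threshold):
    """Filter: drop records below the threshold."""
    for name, amount in pairs:
        if amount >= threshold:
            yield name, amount

def sink(pairs):
    """Data sink: collects results (a stand-in for a warehouse write)."""
    return list(pairs)

raw = ["alice, 120.0", "bob, 15.5", "carol, 300"]
result = sink(only_large(parse(source(raw)), threshold=100))
print(result)  # [('alice', 120.0), ('carol', 300.0)]
```

Each filter knows nothing about its neighbors, so stages can be reordered, replaced, or tested in isolation.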

Data tokens represent values flowing through the system, carrying both the data itself and metadata indicating destination processing units. Dependency management mechanisms track which instructions have received all required tokens and are ready to fire. This relationship between system components enables the data-driven execution model by ensuring operations execute precisely when their prerequisites are satisfied.

Understanding these fundamentals prepares you to evaluate specific architectural patterns and their applications to different data processing scenarios.

Data Flow Diagrams

Data flow diagrams (DFDs) deliver powerful visualization capabilities that transform how you understand and optimize data movement within your systems. By providing clear graphical representations of how data travels from input sources through processing stages to output destinations, DFDs enable your teams to grasp, document, and enhance data flow across even the most complex architectures. These diagrams break down your system into external entities, processes, data stores, and data flows, helping you quickly identify bottlenecks, redundancies, or inefficiencies that may be costing you time and resources.

When it comes to data integration challenges, DFDs prove invaluable for mapping how information exchanges between your different subsystems or external partners. They drive clear communication among your stakeholders, streamline troubleshooting efforts, and guide you toward designing scalable, efficient data flow systems that actually work. By leveraging DFDs early in your development process, you can ensure that your data architecture supports robust data movement, maintains high data quality, and achieves seamless integration with your existing systems.

Ultimately, DFDs empower you and your engineering teams to make strategic, informed decisions about system improvements, ensuring that your data flow aligns perfectly with your business goals and technical requirements while delivering real-world results.

Data Flow Architecture Patterns and Models

Building on the fundamental concepts of data-driven execution, several distinct patterns have emerged to address different latency requirements and processing characteristics. Each pattern makes specific tradeoffs between flexibility, performance, and complexity. One traditional approach is the Batch Sequential model, where data flows through a series of subsystems and each subsystem begins processing only after the previous one has finished. This sequential nature helps preserve data integrity but can introduce bottlenecks and processing delays.

Static vs Dynamic Dataflow Machines

Static dataflow architectures use conventional memory addresses for data tokens, limiting parallel execution to cases where token destinations can be determined at compile time. Early implementations like MIT’s static dataflow machines demonstrated the concept but faced constraints when handling recursive functions or data-dependent control flow.

Dynamic dataflow architectures overcome these limitations by tagging tokens and matching them in content-addressable (associative) memory, which exposes far more parallelism than the static model. The Manchester Dataflow Computer project in the 1980s explored this approach, allowing tokens to carry tags that match them with waiting instructions regardless of memory location. Tagging lets the machine handle recursive algorithms and dynamic data structures, though it requires more sophisticated hardware or runtime support.
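A minimal software sketch of tag matching follows. It is not a model of the Manchester hardware: the `MatchingStore` class and its method names are hypothetical, and a Python dictionary stands in for associative memory. The point is only that tokens from different activations (here, tags 0 and 1) can arrive interleaved and still pair up correctly.

```python
from collections import defaultdict

class MatchingStore:
    """Pairs tokens that share (instruction, tag); a dict stands in for associative memory."""

    def __init__(self, arity=2):
        self.arity = arity
        self.waiting = defaultdict(dict)   # (instruction, tag) -> {port: value}

    def receive(self, instruction, tag, port, value):
        """Store a token; return the operand list once every port is filled."""
        key = (instruction, tag)
        self.waiting[key][port] = value
        if len(self.waiting[key]) < self.arity:
            return None                    # still waiting for a partner token
        operands = self.waiting.pop(key)   # free the slot as soon as the match fires
        return [operands[p] for p in range(self.arity)]

store = MatchingStore()
# Tokens from two activations (tags 0 and 1) arrive interleaved.
first = store.receive("add", tag=0, port=0, value=10)
second = store.receive("add", tag=1, port=0, value=100)
match_1 = store.receive("add", tag=1, port=1, value=5)
match_0 = store.receive("add", tag=0, port=1, value=2)
print(first, second)     # None None
print(match_1, match_0)  # [100, 5] [10, 2]
```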

Synchronous Dataflow Architectures

Synchronous dataflow provides deterministic execution models essential for real time data processing with strict timing guarantees. In these systems, the number of data tokens produced and consumed by each processing unit is known at compile time, enabling static scheduling and resource allocation.

This predictability makes synchronous dataflow ideal for digital signal processing, embedded systems, and real time streaming analytics where low latency is critical. Load balancing and resource management become simpler because the flow of data follows predictable patterns. However, this determinism comes at the cost of flexibility—synchronous systems cannot easily adapt to variable-rate data sources or data-dependent processing.
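Because token rates are fixed, a static schedule can be derived by solving the balance equations: for every edge, firings of the producer times tokens produced must equal firings of the consumer times tokens consumed. The sketch below computes the smallest such firing counts for a small example graph. The actor names, rates, and the `repetition_vector` helper are assumptions for illustration; the function assumes a connected graph with consistent rates and needs Python 3.9 or later for `math.lcm`.

```python
from fractions import Fraction
from math import lcm

# Hypothetical graph: (producer, consumer, tokens produced, tokens consumed).
EDGES = [("A", "B", 2, 3), ("B", "C", 1, 2)]

def repetition_vector(edges, actors):
    """Solve q[src] * produced == q[dst] * consumed for the smallest positive integers.

    Assumes the graph is connected and its rates are consistent.
    """
    rate = {actors[0]: Fraction(1)}
    changed = True
    while changed:
        changed = False
        for src, dst, produced, consumed in edges:
            if src in rate and dst not in rate:
                rate[dst] = rate[src] * produced / consumed
                changed = True
            elif dst in rate and src not in rate:
                rate[src] = rate[dst] * consumed / produced
                changed = True
    # Scale the fractional rates up to whole firing counts.
    scale = lcm(*(r.denominator for r in rate.values()))
    return {actor: int(rate[actor] * scale) for actor in actors}

q = repetition_vector(EDGES, ["A", "B", "C"])
print(q)  # {'A': 3, 'B': 2, 'C': 1}
```

In one schedule period, A fires three times and produces six tokens, B fires twice and consumes all six, and C fires once, so every buffer returns to its starting level. That property is what makes buffer sizes and timing predictable ahead of time.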

Out-of-Order Execution Integration

Modern CPUs implement restricted dataflow concepts within execution windows, allowing instructions to execute as soon as their operands are available rather than strictly in program order. This hybrid approach, called out-of-order execution, brings dataflow benefits to conventional architectures without requiring entirely new programming models.

The same idea of viewing execution at different granularities appears in modeling. A Level 0 data flow diagram (DFD) often represents the entire system's data interactions as a single process before breaking it down into more detailed subprocesses. This high-level view helps in understanding and managing execution at different levels of detail.

Practical applications extend to digital signal processing in audio and video pipelines, network routing where packets flow through transformation stages, and graphics processing where parallel execution of shader operations follows dataflow principles. Apache Spark and similar frameworks apply these concepts at the software level for batch data processing at scale.

Pattern comparison summary:

| Pattern | Parallelism | Predictability | Flexibility | Best For |
|---|---|---|---|---|
| Static Dataflow | Limited | Moderate | Low | Simple pipelines |
| Dynamic Dataflow | Unlimited | Low | High | Complex algorithms |
| Synchronous Dataflow | Scheduled | High | Low | Real time processing |
| Hybrid/Out-of-Order | Windowed | Moderate | Moderate | General computing |

These patterns inform implementation choices, guiding decisions about compilers, runtimes, and infrastructure for your specific use cases.

Control Flow Architecture

Control flow architecture organizes system behavior around an explicit sequence of operations. Unlike data flow architecture, which relies on data availability and movement to drive processes, control flow architecture uses a central controller or scheduler that dictates exactly when and how tasks execute. This approach works well for applications where operational order and workflow management are critical, such as embedded systems, operating systems, and web applications that require precise control.

However, when your data moves through increasingly complex and high-throughput environments, control flow architecture can create bottlenecks that impact your performance. Your systems often struggle to keep pace with real-time processing demands and large-scale data movement, as tasks may be forced to wait for controller instructions rather than proceeding immediately when data becomes available. This limitation can reduce your scalability and responsiveness, especially when your business requires immediate insights or low-latency data processing capabilities.

Despite these challenges, control flow architecture remains highly relevant for your business processes and legacy systems that demand structured workflow management. Understanding these strengths and limitations helps you choose the right architectural approach for your specific needs, enabling you to balance workflow control with efficient data flow and parallel execution that drives your business forward.

Data Integration and Movement

Data integration and movement form the backbone of effective data flow systems. Data integration combines data from multiple sources to deliver a unified, accurate, and accessible view for processing and analysis. You can achieve this through techniques such as extract, transform, load (ETL) processes, data virtualization, and data replication, each enabling data to flow across your diverse platforms and applications.

Data movement transfers data between systems, whether within your local network or across distributed environments. Protocols such as SFTP and REST APIs served over HTTPS provide secure and reliable data movement. Plain FTP is still found in legacy systems but sends data unencrypted, so it is best limited to non-sensitive transfers or replaced. Efficient data movement is critical for maintaining your data quality, supporting real-time analytics, and enabling the informed decision-making that keeps you ahead of the competition.
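To make the extract, transform, load sequence concrete, here is a small sketch using only the Python standard library. The CSV content, the `orders` table, and the cleaning rules are invented for the example. A production pipeline would read from real sources and write to a real warehouse, but the three stages keep the same shape.

```python
import csv
import io
import sqlite3

# Hypothetical input; in practice this would be a file or an API response.
RAW_CSV = """order_id,customer,amount
1, Alice ,19.90
2,bob,5.00
3,,12.50
"""

def extract(text):
    """Extract: read rows from CSV text."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: trim and normalize names, convert types, skip rows with no customer."""
    for row in rows:
        customer = row["customer"].strip().title()
        if customer:
            yield int(row["order_id"]), customer, float(row["amount"])

def load(records, conn):
    """Load: write the cleaned records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", records)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
rows = conn.execute("SELECT customer, amount FROM orders ORDER BY order_id").fetchall()
print(rows)  # [('Alice', 19.9), ('Bob', 5.0)]
```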

By implementing robust data integration and movement strategies, you break down data silos, enhance data flow across your enterprise, and ensure that your data flow systems deliver the timely, actionable insights that drive real results. Your organization gains the ability to harness the full potential of your data infrastructure, transforming complex data challenges into strategic advantages that fuel growth and innovation.

Batch and Streaming Processing

Modern data processing relies on two primary paradigms: batch processing and streaming processing. Batch processing collects large volumes of data over time and transforms them together in scheduled runs. This approach suits data warehousing, periodic reporting, and historical analytics, where high throughput and deep data transformation matter more than immediacy.

In contrast, streaming processing handles data as it arrives, enabling immediate analytics and rapid response to critical events. This approach is essential for IoT sensor monitoring, financial transaction analysis, and real-time decision-making, where low latency and continuous data flow are required.
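The difference between the two paradigms can be sketched on the same set of events. The event data and function names below are made up for illustration, and the streaming function assumes events arrive in timestamp order. The batch version returns one answer after seeing everything, while the streaming version emits a result each time a fixed (tumbling) window closes.

```python
EVENTS = [(1, 10.0), (4, 5.0), (11, 2.5), (14, 7.5), (23, 1.0)]  # (timestamp_s, value)

def batch_total(events):
    """Batch: wait for the full dataset, then compute one result."""
    return sum(value for _, value in events)

def tumbling_windows(events, size_s):
    """Streaming: emit (window_start, total) as soon as each window closes.

    Assumes events arrive in timestamp order.
    """
    current, total = None, 0.0
    for ts, value in events:
        window = ts // size_s
        if current is not None and window != current:
            yield current * size_s, total   # the previous window is complete
            total = 0.0
        current = window
        total += value
    if current is not None:
        yield current * size_s, total       # flush the final window

batch_result = batch_total(EVENTS)
stream_result = list(tumbling_windows(EVENTS, 10))
print(batch_result)   # 26.0
print(stream_result)  # [(0, 15.0), (10, 10.0), (20, 1.0)]
```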

Your organization can harness the full potential of both batch and streaming processing to address comprehensive data processing challenges and maximize strategic value. By leveraging these tailored approaches, you ensure that your data systems operate at peak efficiency—whether processing large datasets for deep strategic analysis or delivering real-time insights for instant competitive advantage—ultimately driving results that transform your data flow systems into powerful business assets.

Implementation Strategies and Technology Stack

Translating architectural patterns into working systems requires careful attention to compiler design, runtime mechanisms, and infrastructure choices. The gap between theoretical dataflow models and production deployments involves practical decisions about software engineering tradeoffs.

When implementing data flow architecture, it is crucial to enforce robust data access controls. This includes restricting access to sensitive information, applying encryption, and maintaining audit measures to ensure only authorized users can view or manipulate data.

Additionally, implementing strict ETL pipelines helps organizations maintain consistent data across various applications, departments, and uses, supporting reliable and unified data processing throughout the system.

Compiler and Runtime Design

Dataflow compilation approaches prove most beneficial when processing stages have clear data dependencies and opportunities for parallel execution. Implementation involves several key elements:

- Embed data dependency tags in compiled binaries, annotating each operation with its required inputs so the runtime can determine when instructions are ready to fire.

- Implement instruction firing mechanisms that monitor operand availability and dispatch operations to available compute resources immediately when prerequisites are satisfied.

- Design token management systems for efficient data flow through processing networks, including memory allocation strategies that minimize copying as data moves between stages.

- Establish synchronization protocols that prevent race conditions when multiple parallel paths must merge their results, ensuring data consistency without sacrificing throughput.

Modern frameworks like Apache Beam abstract these details, allowing developers to express data transformation logic while the runtime handles scheduling and resource allocation across batch and streaming execution modes.
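The firing and synchronization steps listed above can be approximated in a few lines with Python's standard library. This is a sketch, not how Apache Beam or any other framework is implemented: the stage names and the `run_stage` placeholder are hypothetical. `graphlib.TopologicalSorter` (Python 3.9 or later) tracks which stages have all prerequisites satisfied, and a thread pool plays the role of the available compute resources.

```python
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait
from graphlib import TopologicalSorter

# Hypothetical pipeline: each stage maps to the set of stages it depends on.
DEPENDENCIES = {
    "clean_orders": {"read_orders"},
    "clean_users": {"read_users"},
    "join": {"clean_orders", "clean_users"},
    "report": {"join"},
}

def run_stage(name):
    """Placeholder for real work; returns the stage name when finished."""
    return name

def execute(dependencies, workers=4):
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()                       # raises CycleError if the graph has a cycle
    finished = []
    with ThreadPoolExecutor(max_workers=workers) as pool:
        running = set()
        while sorter.is_active():
            # Dispatch every stage whose prerequisites are all done.
            for stage in sorter.get_ready():
                running.add(pool.submit(run_stage, stage))
            done, running = wait(running, return_when=FIRST_COMPLETED)
            for future in done:
                stage = future.result()
                finished.append(stage)
                sorter.done(stage)         # may unlock downstream stages
    return finished

finished = execute(DEPENDENCIES)
print(finished[-1])  # report
```

The two read stages and the two clean stages can overlap, while `join` acts as the merge point that waits for both branches before firing.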

Hardware vs Software Implementation Comparison

| Criterion | Hardware Implementation | Software Implementation |
|---|---|---|
| Performance | Maximum performance with dedicated processing units | Limited by underlying architecture, more flexible deployment |
| Development Cost | High upfront investment, requires specialized expertise | Lower cost, leverages existing systems and cloud platforms |
| Scalability | Fixed capacity, hardware upgrades required | Elastic scaling via fully managed service options |
| Use Cases | DSP, graphics, high-frequency trading | Data pipelines, stream processing, ETL workflows |
| Error Handling | Complex recovery, often requires redundancy | Flexible retry policies, checkpoint-based recovery |

Software implementations offer greater flexibility for most enterprise applications. Platforms like Google Cloud Dataflow provide a fully managed platform that handles auto-scaling to meet variable workloads, while hardware implementations deliver maximum performance for specialized use cases like encrypting data at line rate or processing high throughput financial transactions.

The choice between approaches often depends on latency requirements. Software pipelines typically introduce higher latency than dedicated hardware but support rapid iteration and integration with existing systems. For real time decision making with sub-millisecond requirements, hardware acceleration may be necessary.

These implementation choices connect directly to operational challenges that teams encounter when deploying dataflow systems at scale.

Data Consistency and Quality

Data consistency and quality form the cornerstone of any successful data flow system. Data consistency ensures your information remains accurate and uniform as it progresses through different processing stages and across various systems, enabling you to make strategic decisions with confidence. Techniques such as data validation, normalization, and standardization help you maintain this consistency. They prevent costly errors and discrepancies that could undermine your downstream analytics or critical business processes.

Data quality centers on ensuring the reliability, completeness, and accuracy of your data assets, empowering you to extract maximum value from your information systems. Essential practices like data profiling, cleansing, and certification enable you to identify and correct issues before they impact your real time processing or actionable insights, helping you maintain the competitive advantage that high-quality data provides. Superior data quality allows your organization to trust your data completely, make informed strategic decisions, and harness the full potential of your data flow systems to drive meaningful business results.
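A validation and normalization step might look like the sketch below. The schema (`id`, `email`, `country`), the rules, and the `Issue` record are assumptions chosen for illustration. Real rules depend on your data, and this email check is deliberately simplistic.

```python
from dataclasses import dataclass

@dataclass
class Issue:
    row: int
    field: str
    problem: str

REQUIRED = ("id", "email", "country")   # hypothetical schema

def normalize(record):
    """Standardize formatting so equal values compare equal downstream."""
    return {
        "id": record.get("id"),
        "email": (record.get("email") or "").strip().lower(),
        "country": (record.get("country") or "").strip().upper(),
    }

def validate(records):
    """Return (clean_records, issues); bad rows are reported instead of passed on."""
    clean, issues, seen_ids = [], [], set()
    for i, raw in enumerate(records):
        rec = normalize(raw)
        problems = [Issue(i, f, "missing") for f in REQUIRED if rec[f] in (None, "")]
        if rec["email"] and "@" not in rec["email"]:
            problems.append(Issue(i, "email", "malformed"))
        if rec["id"] in seen_ids:
            problems.append(Issue(i, "id", "duplicate"))
        if problems:
            issues.extend(problems)
        else:
            seen_ids.add(rec["id"])
            clean.append(rec)
    return clean, issues

records = [
    {"id": 1, "email": " Ann@Example.com ", "country": "de"},
    {"id": 2, "email": "no-at-sign", "country": "FR"},
    {"id": 1, "email": "dup@example.com", "country": "us"},
    {"id": 3, "email": "", "country": "us"},
]
clean, issues = validate(records)
print(clean)  # [{'id': 1, 'email': 'ann@example.com', 'country': 'DE'}]
print([(x.row, x.field, x.problem) for x in issues])
```

Reporting rejected rows with a reason, rather than silently dropping them, keeps the quality problems visible to whoever owns the source data.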

By prioritizing data consistency and quality, your organization can build efficient, scalable, and reliable data flow systems that deliver exceptional value through real time analytics, seamless data integration, and high-quality decision-making across your entire operational framework. These advanced capabilities enable you to stay ahead in an increasingly competitive marketplace while maximizing the strategic value of your data investments.

Common Challenges and Solutions

Production deployments of dataflow architecture encounter practical difficulties that require deliberate design and tooling. Understanding these challenges upfront enables more robust implementations.

Memory Management and Token Storage

As data arrives continuously, token storage can grow unbounded without proper management. Use content-addressable (associative) storage for dynamic tokens, for example a hash map keyed by token tag in a software runtime, so that incoming tokens are matched efficiently with waiting instructions. Design garbage collection mechanisms that reclaim memory immediately after data tokens are consumed.

For batch processing workloads, checkpoint strategies allow the system to persist intermediate state to a data store, enabling recovery without reprocessing the entire pipeline. Stream processing requires more aggressive memory management to maintain low latency under sustained throughput.
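The checkpoint idea can be sketched with a JSON file standing in for the data store. The function name, the checkpoint format, and the `fail_at` switch used to simulate a crash are all assumptions for this example. After the simulated failure, the second run resumes from the last saved position instead of starting over.

```python
import json
import os
import tempfile

def process_with_checkpoints(items, path, every=2, fail_at=None):
    """Sum `items`, saving progress to `path` so a rerun resumes instead of restarting."""
    state = {"next_index": 0, "total": 0}
    if os.path.exists(path):
        with open(path) as fh:
            state = json.load(fh)
    for index in range(state["next_index"], len(items)):
        if index == fail_at:
            raise RuntimeError(f"simulated crash at item {index}")
        state["total"] += items[index]
        state["next_index"] = index + 1
        if state["next_index"] % every == 0:
            # Write to a temp file and rename, so a crash never leaves a partial checkpoint.
            tmp = path + ".tmp"
            with open(tmp, "w") as fh:
                json.dump(state, fh)
            os.replace(tmp, path)
    return state["total"]

path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")
data = [5, 10, 15, 20, 25]
try:
    process_with_checkpoints(data, path, fail_at=3)
except RuntimeError:
    pass
with open(path) as fh:
    saved = json.load(fh)
total = process_with_checkpoints(data, path)
print(saved)  # {'next_index': 2, 'total': 15}
print(total)  # 75
```

The checkpoint interval is a tradeoff: frequent checkpoints cost more I/O, while sparse ones mean more work is repeated after a failure (here, item 2 is processed twice).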

Load Balancing and Resource Allocation

Uneven data distribution across parallel execution units creates bottlenecks that undermine the performance benefits of dataflow architecture. Design dynamic load distribution algorithms that monitor queue depths and redirect incoming data to underutilized processors.
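One simple form of load-aware routing sends each record to whichever worker currently has the least outstanding work. The sketch below is a simplified, single-threaded model with a hypothetical `route` function and a caller-supplied cost estimate. A live system would also decrease each worker's load as work completes.

```python
def route(records, worker_count, cost):
    """Send each record to the worker with the smallest outstanding load."""
    load = [0] * worker_count
    assignment = [[] for _ in range(worker_count)]
    for record in records:
        # Ties go to the lowest-numbered worker.
        target = min(range(worker_count), key=load.__getitem__)
        assignment[target].append(record)
        load[target] += cost(record)
    return assignment, load

# Record values double as their processing cost in this example.
assignment, load = route([8, 1, 1, 1, 1, 4], worker_count=2, cost=lambda r: r)
print(assignment)  # [[8], [1, 1, 1, 1, 4]]
print(load)        # [8, 8]
```

Round-robin assignment of the same records would leave the workers with loads of 10 and 6, so the expensive first record would dominate one queue.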

Implement backpressure mechanisms that slow data sources when downstream stages cannot keep pace. This prevents memory exhaustion while maintaining data quality by ensuring no records are dropped. Modern frameworks like Apache Flink handle backpressure automatically, propagating rate limits upstream through the processing network.
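A bounded buffer is the simplest way to see backpressure at work. In this sketch, built on the standard `queue` and `threading` modules with an invented `run` function, the producer blocks whenever the buffer is full, so it can never get more than `capacity` items ahead of the slow consumer and no record is dropped.

```python
import queue
import threading
import time

def run(total=20, capacity=3):
    buffer = queue.Queue(maxsize=capacity)   # bounded pipe between the two stages
    max_depth = 0
    received = []

    def producer():
        nonlocal max_depth
        for i in range(total):
            buffer.put(i)                    # blocks while the buffer is full
            max_depth = max(max_depth, buffer.qsize())
        buffer.put(None)                     # sentinel: no more data

    def consumer():
        while (item := buffer.get()) is not None:
            time.sleep(0.001)                # simulate a slow downstream stage
            received.append(item)

    threads = [threading.Thread(target=producer), threading.Thread(target=consumer)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return received, max_depth

received, max_depth = run()
print(len(received), max_depth <= 3)  # 20 True
```

Distributed frameworks apply the same principle across network boundaries, but the effect is the same: the source slows to the pace of the slowest stage and memory use stays bounded.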

Debugging and System Visibility

Because execution order in dataflow systems is driven by data arrival and may differ from run to run, traditional step-through debugging is often ineffective. Create comprehensive logging for data token flows, recording timestamps and processing unit assignments for each transformation.

Build visual debugging tools that provide graphical representation of execution paths through the system. A data flow diagram showing real time progress helps operators identify performance issues and trace anomalies. These tools prove essential for complex systems where multiple parallel paths process data simultaneously.

Consider implementing distributed tracing that follows individual records from data sources through all processing stages to their final destination. This visibility enables root cause analysis when data transformation produces unexpected results.
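Record-level tracing can be prototyped before adopting a dedicated tracing system. In the sketch below, every record is wrapped in an envelope carrying a trace ID, and a decorator logs each stage the record passes through. The `traced` decorator, the envelope layout, and the in-memory `TRACE_LOG` are hypothetical stand-ins for a real tracing backend.

```python
import time
import uuid

TRACE_LOG = []   # stand-in for a real log or tracing backend

def traced(stage_name):
    """Decorator: record which stage handled which record, and for how long."""
    def wrap(fn):
        def inner(envelope):
            start = time.perf_counter()
            payload = fn(envelope["payload"])
            TRACE_LOG.append({
                "trace_id": envelope["trace_id"],
                "stage": stage_name,
                "seconds": time.perf_counter() - start,
            })
            # Pass the same trace ID downstream with the new payload.
            return {"trace_id": envelope["trace_id"], "payload": payload}
        return inner
    return wrap

def ingest(text):
    """Data source: assign a fresh trace ID to each incoming record."""
    return {"trace_id": uuid.uuid4().hex, "payload": text}

@traced("parse")
def parse(text):
    return int(text)

@traced("double")
def double(number):
    return number * 2

out = double(parse(ingest("21")))
path = [e["stage"] for e in TRACE_LOG if e["trace_id"] == out["trace_id"]]
print(out["payload"], path)  # 42 ['parse', 'double']
```

Filtering the log by trace ID reconstructs the exact path one record took, which is the information needed when an output value looks wrong.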

Conclusion and Next Steps

Dataflow architecture enables superior parallelism and responsiveness for data-intensive applications by executing operations when input data becomes available rather than following predetermined sequences. This fundamental shift supports everything from real time analytics generating immediate insights to batch data processing handling petabyte-scale transformations. Real-time analytics powered by data flow architecture is critical for applications such as financial trading platforms and fraud detection.

Immediate next steps:

- Assess current system bottlenecks by identifying where sequential processing limits throughput

- Evaluate dataflow patterns against your specific use cases—synchronous for real time processing, dynamic for variable workloads

- Prototype with software implementations using frameworks like Apache Beam or Apache Spark before considering hardware solutions

- Implement observability from the start, building data flow diagram visualization into your pipeline design

- Ensure all data, especially sensitive or personally identifiable information, is encrypted during transfer and storage to maintain security and privacy compliance

For comprehensive system design, explore related areas including stream processing frameworks that handle both batch and streaming workloads, real-time analytics platforms providing actionable insights from streaming data, and parallel computing architectures that complement dataflow approaches for specific processing stages. Understanding data integration patterns will help connect dataflow pipelines with external systems such as data warehouses and other information systems, while context diagrams give stakeholders a high-level view of those connections.